Category

war

The Tacos of Memory – A poem for Parsha Vayera

Rick Lupert

October 21, 2021

Nation Shall Not…But They Still Do – A poem for Parsha Lech Lecha

Rick Lupert

October 14, 2021

Nation Shall Not…But They Still Do – A poem for Parsha Lech Lecha

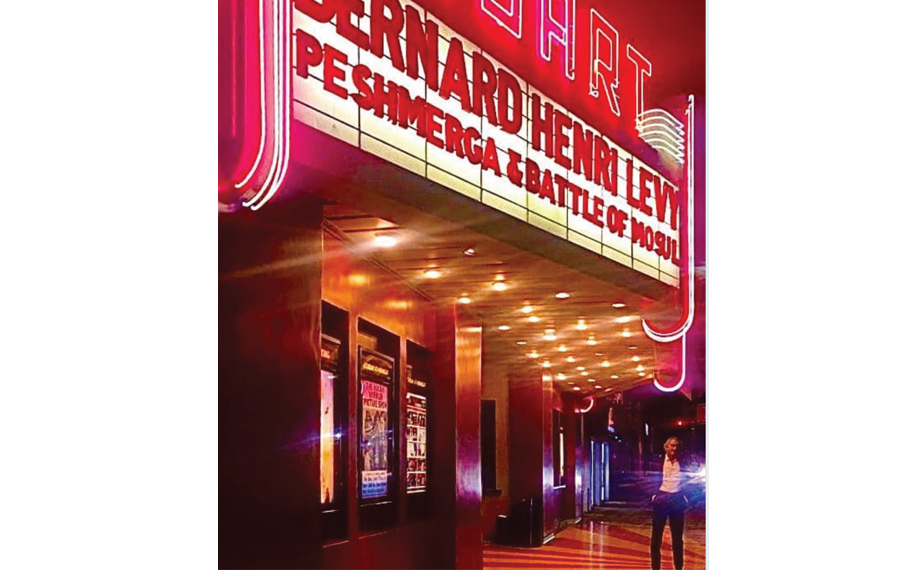

Nuart Retrospective Presents the War Documentaries of Bernard-Henri Lévy

Gerri Miller

January 21, 2020

Moses the Warrior and the Spatula of Destiny – A Poem for Parsha Matot-Masei

Rick Lupert

August 1, 2019

New Articles

If You Heard What I Heard Teams Up with Matisyahu for ‘A Night of Resilience’ Benefit

Kylie Ora Lobell

April 24, 2024

Campus Rioters are not Just Dangerous and Antisemitic. They’re Also Phony.

David Suissa

April 24, 2024

Divining the Future

Morton Schapiro

April 24, 2024

When Hatred Spreads

Dan Schnur

April 24, 2024

More than 300 Clergy Members Sign Letter Asking Columbia University To Protect Jewish Students.

Alan Zeitlin

April 24, 2024

