Category

robots

Should robots count in a minyan? Rabbi talks Turing test

Robots can hold a conversation, but should they count in a minyan?

Hoop, there it is! Milken’s robotics team scores big

When “Sir Lancebot,” the motorized basketball-playing robot built by the Milken Community High School’s robotics team, made its debut appearance at a regional competition in San Diego in early March, the results were not encouraging.

Israeli-built robots shoot for U.S. competition

Forward Omri Casspi made the leap from Israel to the National Basketball Association in 2009, but the latest Israeli hoopsters seeking to compete on American soil aren’t human.

Judea Pearl, father of slain WSJ reporter, is a leader in artificial intelligence

A man arrives at an airport for a flight, and as he goes through security the agent asks some questions.

Science program helps six Milken grads head to MIT

“One of the things we were really committed to when we started the academy is that kids were not going to fit into the typical box of science classes,” said Jason Ablin, Milken’s head of school.

VIDEO: Woody Allen and the Jewish robots (from ‘Sleeper’)

Woody Allen is fitted for a new suit by robot Jewish tailors. Ginsberg & Cohen, Computerized Fittings, Since 2073. From ‘Sleeper’

New Articles

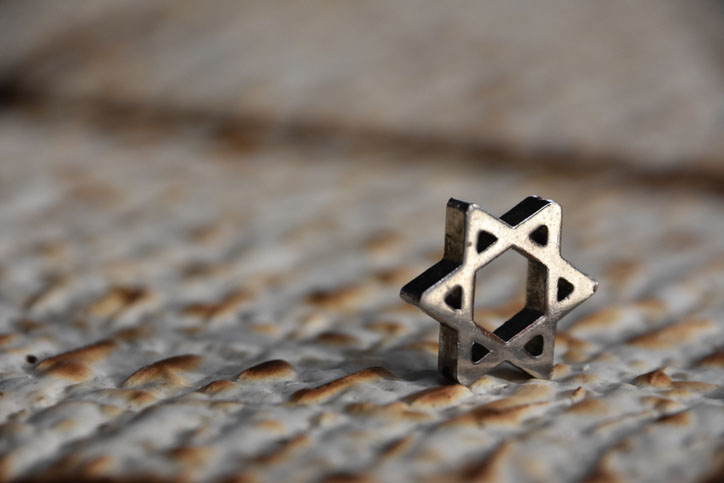

Shabbat HaGadol – Redeeming Dibbur – Voice and Speech of God

Ha Lachma Anya

Passover 2024: The Four Difficulties

Israel Strikes Deep Inside Iran

More news and opinions than at a Shabbat dinner, right in your inbox.
